How to Save 2 Hours a Day with ChatGPT & GitHub Copilot

Every developer knows the feeling: hours slip away on boilerplate code, documentation, and the same routine tasks over and over. This is exactly where ChatGPT and GitHub Copilot can help. These AI tools take over repetitive programming work so you can focus on the truly creative tasks. Many developers already use such tools daily. According to a recent survey, 85% regularly work with AI support, and 9 out of 10 save at least an hour per week as a result. One in five even reported saving over 8 hours per week (more than a full workday) thanks to AI helpers. Used well, these tools can save you around 2 hours per day too.

Goal of this article: We’ll show you concrete AI workflows to make your developer day more efficient. We cover the types of tasks (generating, explaining, reviewing code), effective prompt patterns, code generation with tests and docs, code review with safety nets, and the limitations of these tools. After this blog post, you’ll know how to get the most out of ChatGPT and Copilot. Even if you have little experience with AI: don’t worry, we’ll guide you step by step. Let’s get started!

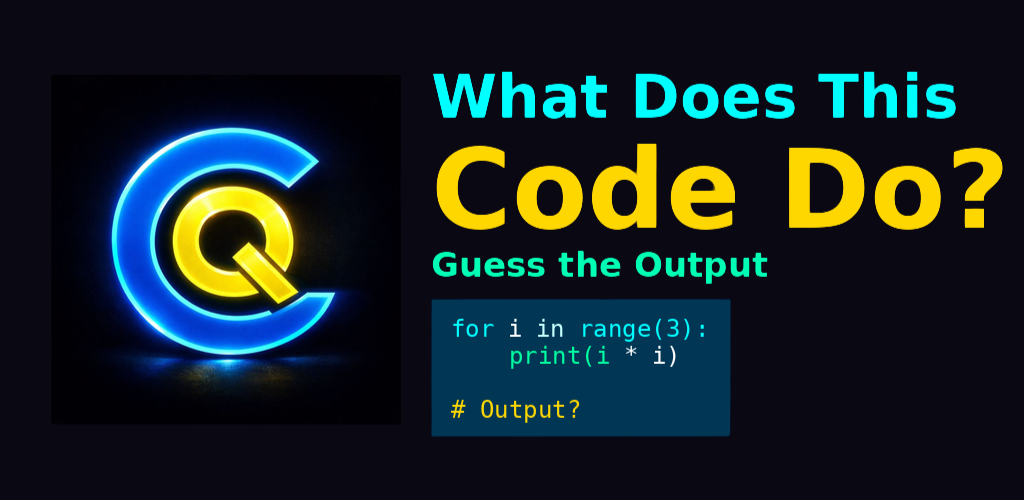

1. Types of Tasks: Generate, Explain, Review

What can ChatGPT and GitHub Copilot be used for in a developer’s daily life? Basically, AI support falls into three categories: generating, explaining, and reviewing. Let’s take a closer look at these task types.

Generating

Here, the AI takes over writing code or text for you. This includes everything from boilerplate code and repetitive functions to config files, tests, or documentation. These monotonous tasks are exactly what many developers prefer to delegate to AI. For example, ChatGPT can generate entire code blocks or scripts on demand, whether it’s a database model, an API endpoint, or a Dockerfile for your service. GitHub Copilot works more in the background: as you write code, it automatically suggests suitable code completions (often entire lines or functions). This way, you get a basic solution in seconds, which you can then adapt. By letting the AI handle these routines, you have more time for the interesting parts of the job, like architecture design or tricky algorithms.

Explaining

The AI as mentor and documenter. ChatGPT can explain or summarize code in an understandable way. If you don’t understand an unfamiliar code snippet, just paste it into ChatGPT and ask for a simple explanation. In seconds, you’ll get a breakdown of what the code does and why. This is incredibly helpful not only for beginners but also saves experienced developers time when getting up to speed with unfamiliar code. ChatGPT can also summarize changes (“Summarize the latest commits”) or generate documentation texts. Many programmers already have AI write their documentation or code comments. The result: you understand the code better and have less writing to do yourself.

Reviewing

Four eyes see more than two, even when two of them are digital. ChatGPT can review your code, scan for errors, and make suggestions for improvement. For example, you can ask the AI assistant for a code review: “Look at the following code and point out possible bugs or security issues.” The model will highlight typical problems (e.g., unchecked inputs, inefficient loops) and often suggest concrete improvements. AI can also help with debugging: describe an error message or incorrect behavior to ChatGPT, and it will often provide helpful hints about the cause. While developers understandably like to keep control over tricky problems, the AI is invaluable as a smart helper for brainstorming. Finally, ChatGPT can even generate tests to check your code, but more on that later. The important thing is: the AI provides suggestions, but you as a human decide what to implement. This human-in-the-loop collaboration ensures that the end result stays correct and actually makes sense.

2. Prompt Patterns: Role, Constraints, Tests, Style

The key to useful ChatGPT answers lies in the right input. This input text, the command to the AI, is called a prompt. How do you phrase prompts so that ChatGPT delivers exactly what you need, such as error-free code instead of vague tips? Prompt patterns help here. Four elements have proven effective:

Assign a role: Put ChatGPT in a specific role or persona so the answers have the desired tone and expertise. For example, you could start with “You are an experienced senior software developer…”. The model will then respond with the expertise and language style of a professional developer. Similarly, you can specify roles like “security expert,” “technical writer,” or “tester,” depending on what you need.

Constraints (requirements and boundaries): Don’t hesitate to give the AI clear instructions and boundaries. What must be included, what must not? For example, you could specify: “Use only built-in Python libraries” or “The code must not make external API calls.” Style requirements also belong here (more on that in a moment). Such constraints deliberately narrow the solution space and prevent ChatGPT from drifting off-topic or doing unwanted things. In one example, it was specified that a marketing plan must not mention competitors, and ChatGPT complied. Similarly, in technical prompts, you can specify not to use certain terms or to follow certain patterns.

Include tests or examples: A trick for more precise results is to give ChatGPT small test tasks or examples. If you want a generated function to meet certain requirements, you could describe a mini-test: “Write function X. For input Y, the result should be Z.” The AI will then try to produce code that passes this test. You can also specify example inputs/outputs (“Example: for n=5 the output should be 120”) or ask ChatGPT to create test cases itself. This way, you immediately check the correctness of the solution. Tests in the prompt act as additional guardrails and quality control during code generation.

Style and format: Finally, you should define how the answer should be formatted. Do you want pure code as an answer? Then write, for example, “Output only the final code, no explanations.” Or do you need an explanatory text, perhaps in a specific language? Then state that clearly. You can also specify the coding style, such as “in PEP8 style” or “use functional programming”. The same goes for language answers: tone (casual vs. professional), length (short summary vs. detailed report), and structure (bullet points, table, step-by-step) can all be controlled in the prompt. The more specific you are, the more likely the answer will match your expectations.

You can combine these patterns—role, constraints, tests, style—as needed. A good prompt might be: “Act as an experienced Python developer. Explain in a friendly, simple tone what the following code snippet does. Constraint: No technical terms, explain so a beginner can understand. Format: Answer in no more than 5 sentences.” With such clear instructions, the chance of a helpful, targeted answer increases enormously. Remember: A precise prompt saves you time in the end because you have to do less follow-up or corrections.
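The four patterns can also be assembled programmatically. The following is a minimal sketch, not a fixed recipe: the helper name `build_prompt`, its parameters, and the exact wording of the generated lines are assumptions made for illustration.

```python
def build_prompt(role, task, constraints=(), examples=(), output_format=None):
    """Assemble a prompt from role, task, constraints, examples, and format.

    Hypothetical helper: the section labels are illustrative choices,
    not a format that ChatGPT requires.
    """
    parts = [f"You are {role}.", task]
    if constraints:
        parts.append("Constraints:")
        parts.extend(f"- {c}" for c in constraints)
    if examples:
        parts.append("Examples:")
        parts.extend(
            f"- Input: {given} -> expected output: {expected}"
            for given, expected in examples
        )
    if output_format:
        parts.append(f"Format: {output_format}")
    return "\n".join(parts)


prompt = build_prompt(
    role="an experienced Python developer",
    task="Write a function factorial(n).",
    constraints=["Use only built-in Python libraries."],
    examples=[("n=5", "120")],
    output_format="Output only the final code, no explanations.",
)
print(prompt)
```

Keeping the pieces separate makes it easy to swap the role or tighten a constraint without rewriting the whole prompt.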

3. Code Generation: Components, Tests, Docs – in Minutes Instead of Hours

Let’s get practical: how exactly do we save time? Let’s look at typical workflows where ChatGPT and Copilot can save you hours every day. Three important areas of code generation are component code, tests, and documentation.

Generating Components and Functions

Imagine you need to build a new component, such as a data processing function, an API endpoint, or a piece of frontend UI. Normally, you’d start from scratch. With AI support, you get a draft in seconds. Briefly describe to ChatGPT what the component should do (and any constraints), and you’ll get a code suggestion. Example: “Write a Python function convert_to_csv(data) that takes a list of dictionaries and outputs CSV to stdout.” ChatGPT will then provide a draft that usually runs as-is, including the CSV writing logic. Of course, you need to check and possibly adapt the suggestion, but the groundwork is done. GitHub Copilot, on the other hand, helps as you type the first lines: if you write def convert_to_csv(data): and hit Enter, Copilot might immediately suggest the rest of the function code. These autocompletions are based on learned patterns from countless similar functions. Especially for boilerplate code (repetitive structures like logging, setters/getters, parser code), it’s best to let the AI write it out completely. This is much faster than typing by hand and helps you avoid careless mistakes. Studies show that developers complete many tasks noticeably faster with Copilot, especially the repetitive ones. The motto is: The AI types, you think. This lets you focus on architecture and tricky logic while ChatGPT & Copilot handle the monotonous part.
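To give a feel for the kind of draft such a prompt might produce, here is a hand-written sketch using Python’s standard `csv` module. It is not actual ChatGPT output. The optional `out` parameter is an assumption added here so the function can be tested without touching stdout.

```python
import csv
import sys


def convert_to_csv(data, out=None):
    """Write a list of dictionaries as CSV to `out` (stdout by default)."""
    out = out if out is not None else sys.stdout
    if not data:
        return  # nothing to write, not even a header

    # Collect column names in first-seen order so rows with
    # differing keys still fit into one table.
    fieldnames = list(dict.fromkeys(key for row in data for key in row))

    writer = csv.DictWriter(
        out, fieldnames=fieldnames, restval="", lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(data)


convert_to_csv([
    {"name": "Ada", "language": "Python"},
    {"name": "Linus", "language": "C"},
])
```

Details like missing keys (`restval`) or empty input are exactly the points worth checking in a generated draft.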

Generating Tests

Writing tests takes time. Time many of us would like to save. Luckily, AI tools are perfect test machines. You can ask ChatGPT to create unit tests for a given code: “Write unit tests (with pytest) for the following function.” If you prompt cleverly (e.g., “Include normal cases, edge cases, and error cases”), you’ll get a whole bundle of test cases in seconds. According to a Real Python report, ChatGPT significantly reduces the time needed to write good tests, generating complete test suites based on your code or specification and even covering edge cases. This efficiency lets you focus on the actual application logic while the AI prepares the test framework code. ChatGPT often thinks of test scenarios you might only consider later, improving test coverage. Of course, don’t trust blindly—you should quickly review the generated tests. But in most cases, they provide an excellent starting point that you only need to tweak slightly. Some developers even report that thanks to Copilot and ChatGPT, they write tests more often because the barrier is so much lower. More tests in less time is a clear productivity gain and a quality boost to boot.
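As an illustration of what “normal cases, edge cases, and error cases” can look like, here is a small sketch. The function `clamp` is a hypothetical example, not taken from any project. The tests are written so that pytest can collect them, but they use plain asserts so the file also runs without pytest installed.

```python
def clamp(value, low, high):
    """Hypothetical function under test: restrict value to [low, high]."""
    if low > high:
        raise ValueError("low must not exceed high")
    return max(low, min(value, high))


# pytest collects functions named test_* and reports a failed assert as a failure.

def test_value_inside_range_is_unchanged():  # normal case
    assert clamp(5, 0, 10) == 5


def test_value_outside_range_is_clamped():  # normal case
    assert clamp(-3, 0, 10) == 0
    assert clamp(42, 0, 10) == 10


def test_boundaries_are_inclusive():  # edge case
    assert clamp(0, 0, 10) == 0
    assert clamp(10, 0, 10) == 10


def test_inverted_bounds_raise_value_error():  # error case
    # With pytest available, `with pytest.raises(ValueError):` is the usual form.
    try:
        clamp(5, 10, 0)
    except ValueError:
        return
    raise AssertionError("expected ValueError for inverted bounds")
```

Saved as a file such as `test_clamp.py`, this can be run with the `pytest` command.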

Documentation and Comments

Hardly anyone enjoys writing documentation. ChatGPT, on the other hand, never tires of formulating text. So why not outsource routine documentation to the AI? Whether it’s docstrings for functions, class documentation, or even entire README sections: with the right instructions, ChatGPT produces neatly formatted documentation text in seconds. For example, you can enter: “Write a docstring for the above function with description, parameters, and return value” and the assistant will generate something like this:

    def add(a, b):
        """
        Add two numbers together.

        Args:
            a (int): The first number.
            b (int): The second number.

        Returns:
            int: The sum of the two numbers.
        """
        return a + b

As you can see, you get a proper comment with no effort. You can also ask ChatGPT: “Create a short guide for installing and using my project XY.” and get a ready-made draft for the README. Many developers now delegate writing documentation texts to AI to make their own writing easier. The AI is surprisingly consistent and fast. The only important thing is to give it enough context (e.g., explain roughly what your project does) so the documentation is accurate. Overall, you can massively reduce tedious writing work. Instead of spending an hour typing docs, you generate them in 5 minutes with ChatGPT and just polish them a bit. Over time, this makes a huge difference.

4. Review and Safety Nets: Quality Assurance with AI

For all the enthusiasm about AI automation: never blindly trust the output. Code reviews and tests remain essential, especially when a large part of the code comes from an AI. See ChatGPT and Copilot as super-fast helpers, but you are still the quality manager. Here are some strategies to safeguard AI-generated code:

Manual Code Review

Always critically examine the code suggested by ChatGPT. Does it even run? Does it meet the requirements? Beware: AI code often looks plausible at first glance but can have hidden errors. Microsoft researchers found that Copilot sometimes delivers code that “looks right but isn’t.” So: compile/run the code and test the functionality. Pay special attention to edge cases and error handling. Does the code really do what it’s supposed to, even in unusual situations? It’s often worth asking ChatGPT directly for a review (AI checks AI): “Analyze the above code and point out possible issues.” Interestingly, the model sometimes finds its own mistakes or inaccuracies in the generated code and improves them. Still, this is no substitute for human judgment.
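To make “looks right but isn’t” tangible, here is a constructed example (hypothetical, not real Copilot output). The naive version passes a quick happy-path check, and the bug only shows up once you try an input whose length is not a multiple of the chunk size.

```python
def chunk_naive(items, size):
    """Plausible-looking draft: silently drops a trailing partial chunk."""
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk(items, size):
    """Reviewed version: keeps the last partial chunk and validates size."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [items[i:i + size] for i in range(0, len(items), size)]


print(chunk_naive([1, 2, 3, 4], 2))     # [[1, 2], [3, 4]]  (happy path looks fine)
print(chunk_naive([1, 2, 3, 4, 5], 2))  # [[1, 2], [3, 4]]  (the 5 is lost)
print(chunk([1, 2, 3, 4, 5], 2))        # [[1, 2], [3, 4], [5]]
```

A single edge-case input is often enough to expose this kind of error, which is why running the code beats reading it.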

Static Code Analysis

Additionally, use automated tools like linters and static analysis tools. They act as a “safety net” by highlighting obvious problems, such as syntax errors, unused variables, type conflicts, or security vulnerabilities. If Copilot, for example, suggests code that uses outdated or unsafe practices, your linter (or SonarQube, ESLint, Pylint, etc.) will flag it. Such tools can quickly scan the AI code and filter out major blunders. This is especially helpful because AI models don’t have real project context and sometimes “forget” necessary import statements. In one example, ChatGPT generated seemingly working code for JSON processing but left out the important import json statement. A simple static analysis tool or your own trained eye would have caught that immediately. Bottom line: Trust, but verify. Let the AI write 100 lines of code, but then turn on your compiler, linter, and brain 😉
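The missing `import json` case can be illustrated with a deliberately naive check built on Python’s standard `ast` module. This is a sketch of the idea only: it ignores scoping rules, and the function name `undefined_names` is an assumption. Real linters such as Pylint do this far more thoroughly.

```python
import ast
import builtins


def undefined_names(source):
    """Return names that are read somewhere but never bound anywhere.

    Naive sketch: treats the whole file as one scope, so it can miss
    problems a real linter would report.
    """
    defined = set(dir(builtins))
    used = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                # `import a.b` binds `a`; `import a as x` binds `x`.
                defined.add((alias.asname or alias.name).split(".")[0])
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            defined.add(node.name)
        elif isinstance(node, ast.arg):
            defined.add(node.arg)
        elif isinstance(node, ast.ExceptHandler) and node.name:
            defined.add(node.name)
        elif isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Load):
                used.add(node.id)
            else:
                defined.add(node.id)
    return used - defined


generated = "def load(text):\n    return json.loads(text)\n"
print(undefined_names(generated))                    # {'json'}
print(undefined_names("import json\n" + generated))  # set()
```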

Run Automated Tests

We’ve seen that ChatGPT can generate tests—use them! Actually run the generated unit tests in your environment. If one fails, that’s a clear sign there’s still a bug in the code. In general, you should run AI code as early as possible and test it with real data. This validates whether everything works as expected. A potential downside of AI-generated code is that you might be tempted to think “It’ll be fine, the AI knows what it’s doing.” But that’s not the case. Large language models don’t have guaranteed correct world knowledge—they’re essentially guessing. So: test every feature (manually or automatically) before it goes into production. Interestingly, a study noted that reviewing AI answers sometimes takes so much time that the productivity gain is partially offset. You can counter this by establishing the right safety nets: automated checks, good test coverage, and clear coding standards by which you measure AI results. This way, you maintain the balance between speed and quality.

In summary: AI code should always be treated with a healthy dose of skepticism. With code review, linters, and tests, you ensure that “faster code” doesn’t mean “worse code.” Then you benefit maximally from the time savings without suffering from bugs.

5. Limitations & “Human-in-the-Loop”

Even though ChatGPT and Copilot can do impressive things, they’re not magic unicorns. There are clear limitations you should know about, and reasons why humans remain indispensable.

Limited Context and Logic Understanding

AI models don’t have a holistic understanding of your project. They generate output based on probabilities and patterns from training data, without real insight. Complex architectural decisions or deeply nested logic are beyond their grasp. This means that for demanding tasks, the suggestions quickly lose quality or are just plain wrong. Many developers report that they prefer to leave AI out of complex application logic or tough debugging, keeping control themselves. In fact, the JetBrains survey cites limited understanding of complex logic as the main concern with AI code. The tools often provide a starting point, but to build truly robust solutions, humans must stay in charge.

Inconsistent Code Quality

Sometimes AI code shines, sometimes it produces nonsense—the quality is not consistent. One of the biggest concerns among developers is exactly this fluctuation in AI code quality. You can never be 100% sure whether the next suggestion is brilliant or garbage without checking it (see previous chapter). Subtly wrong code is especially dangerous because it looks okay at first glance. This is where experience counts: an experienced developer will notice and correct it, while an inexperienced one might miss the error. So: Always use your common sense. The AI is an assistant, not an all-knowing programmer.

Data Privacy and Security Risks

An often overlooked point: as soon as you enter code or data into ChatGPT, this information potentially leaves your company. Confidential data or proprietary code should not be sent unfiltered to external AI services. Many companies are (rightly) cautious about using cloud AI for development. In addition, AI suggestions could have licensing implications if, for example, code from public repos is reused. The topic is complex, but the short version: only share sensitive things if you’re sure what happens to the data you send, and check suggestions for possible copyright issues. Data privacy and security are also among the top concerns with AI use. Copilot does have filters intended to keep it from reproducing longer passages of publicly available code verbatim, but some residual risk remains.
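One way to avoid sending things “unfiltered” is to scrub a snippet before pasting it into a prompt. The sketch below is illustrative only: the two regular expressions and the function name `redact` are assumptions, and they catch just a couple of obvious cases. It is no substitute for a proper secret scanner or your company’s policy.

```python
import re

# Illustrative patterns only; real secret scanners cover far more formats.
_SECRET_ASSIGNMENT = re.compile(
    r"\b(\w*(?:password|secret|token|api_key)\w*\s*=\s*)(['\"]).*?\2",
    re.IGNORECASE,
)
_EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text):
    """Mask quoted credential values and email addresses in a snippet."""
    text = _SECRET_ASSIGNMENT.sub(r"\1\2<REDACTED>\2", text)
    return _EMAIL.sub("<EMAIL>", text)


snippet = 'DB_PASSWORD = "hunter2"\nnotify("alice@example.com")'
print(redact(snippet))
```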

Impact on Your Skills

Finally, and especially important for inexperienced developers: don’t forget how to program just because the AI does so much for you! Some fear that too much reliance on Copilot & co will cause their own coding skills to atrophy over time. And indeed: if you spend months only accepting AI completions and hardly think for yourself, your problem-solving skills will eventually get rusty. Be aware of this and use the AI as a companion, not as a replacement for your own effort. A nice approach is “AI as a teacher”: let ChatGPT suggest solutions, but also ask for explanations so you understand the code. That way, you even learn along the way. Also, don’t hesitate to try your own ideas, since the AI is patient and helps you improve. Human-in-the-loop means that the human remains the one who steers, validates, and creatively leads the process. The AI delivers, but you set the pace.

Conclusion on the Limitations

ChatGPT and GitHub Copilot are fantastic accelerators for routine tasks, but they don’t replace your head or your gut feeling. Keep complex decisions in human hands, critically review AI results, and make sure you stay in control of the code. There’s enormous potential in this human-AI partnership, as long as you know and compensate for the weaknesses.

6. Starter Prompt Library: Your AI Workflows to Try Out

To wrap up, we’ve put together a small prompt library for you. These examples help you get started right away and implement typical developer workflows with AI. Feel free to adapt the wording—they’re just starting points:

Generate code: “You are an experienced senior developer. Write a Python function calculate_statistics(data) that takes a list of numbers and returns minimum, maximum, and average as a dictionary. Focus on efficient implementation and clear structure. Comment the code well.”

What does this prompt do? It instructs ChatGPT to generate code in a specific language, gives the AI a role (senior developer), and clearly defines the task and output type. It also demands efficiency, structure, and comments, so you immediately get usable, well-commented code for this requirement.

Explain code: “You are a software expert and tutor. Explain step by step in simple words what the following code does and why it was implemented this way. Avoid technical jargon: [Insert code here]”

Explanation: This prompt has ChatGPT take on the role of a patient teacher. Ideal for getting complex code explained in an understandable way. The instruction to avoid jargon ensures that even less experienced people can follow the explanation.

Review code: “Take on the role of a code reviewer focused on best practices. Review the following Python code for errors, potential security issues, and optimization opportunities. Provide detailed feedback with concrete suggestions for improvement: [Insert code here]”

Explanation: Here, the AI becomes a strict reviewer. It will comb through the code and, for example, point out inefficient spots, possible bugs, or violations of style guidelines. You get a second pair of eyes that points out things you might have missed, including tips on how to improve.

Debugging: “You are a developer with extensive debugging experience. I have the following problem: [Error description or message]. Analyze the related code (see below) and explain the probable cause of the error. Then show me how to fix the error. Code: [Insert code here]”

Explanation: This prompt is worth its weight in gold when you’re facing a cryptic error. The AI will try to deduce the cause from the description and code (e.g., wrong variable initialization or off-by-one error) and provide a solution suggestion. While it doesn’t replace thorough debugging, it can often provide a crucial hint.

Write tests: “Act as a test automation expert. Write unit tests with pytest for the following function. Consider typical use cases, edge cases, and error cases. Function: [Insert code here]”

Explanation: This will have ChatGPT generate a series of test functions to ensure the given function works correctly in various scenarios. The instruction covers both standard and special cases. You quickly get a solid test base without having to think through all the test ideas yourself.

Generate documentation: “You are a technical writer. Write a short README introduction for my project XY. Briefly explain the purpose and main function of the project, show the steps for installation, and provide a small usage example. Use friendly, clear language.”

Explanation: This prompt gives you a first draft of a project introduction, as is common in GitHub README files. ChatGPT formulates the benefit of your project, explains installation, and even gives an example—all in seconds. You can then enrich the text with project-specific details. The result: one less tedious task, time saved!
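For orientation, here is a hand-written sketch of what a reasonable answer to the first prompt (“Generate code”) could look like. It is not actual ChatGPT output. The dictionary keys and the behavior for an empty list are assumptions, since the prompt leaves both open.

```python
def calculate_statistics(data):
    """Return minimum, maximum, and average of a list of numbers.

    Raises ValueError for an empty list, because none of the three
    values is defined in that case (an assumption; the prompt does
    not say what should happen).
    """
    if not data:
        raise ValueError("data must contain at least one number")
    return {
        "min": min(data),
        "max": max(data),
        "average": sum(data) / len(data),
    }


print(calculate_statistics([2, 4, 6]))  # {'min': 2, 'max': 6, 'average': 4.0}
```

Gaps like the empty-list case are a good reason to add a constraint or an example to the prompt.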

With this prompt library in hand, you can start experimenting right away. Copy a suitable prompt, adapt it to your use case, and let ChatGPT work for you. After a short time, you’ll develop a feel for which instructions yield good results.

Pro tip: Save successful prompts (e.g., in a personal collection) to reuse them again and again. Many developers build their own prompt library this way, just like reusable code snippets.
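A personal collection can be as simple as a dictionary of templates. The sketch below uses `string.Template` from the standard library. The names `PROMPTS` and `render` and the placeholder names `$language` and `$code` are assumptions for illustration.

```python
from string import Template

# Hypothetical personal prompt collection; adapt names and wording freely.
PROMPTS = {
    "review": Template(
        "Take on the role of a code reviewer focused on best practices. "
        "Review the following $language code for errors, potential security "
        "issues, and optimization opportunities:\n$code"
    ),
    "tests": Template(
        "Act as a test automation expert. Write unit tests with pytest for "
        "the following function. Consider typical use cases, edge cases, "
        "and error cases.\nFunction:\n$code"
    ),
}


def render(name, **fields):
    """Fill in a stored prompt; raises KeyError if a placeholder is missing."""
    return PROMPTS[name].substitute(**fields)


print(render("review", language="Python", code="def add(a, b): return a + b"))
```

Because `substitute` fails loudly on a missing field, a half-filled prompt never reaches the AI by accident.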

Conclusion

ChatGPT and GitHub Copilot can massively boost your productivity as a developer if you use them purposefully. Delegate boring routine jobs to the AI and use the time you gain for the exciting aspects of development. At the same time, stay alert, check results, and learn from the AI. This way, you get the best of both worlds: speed and quality. Good luck trying it out! 🚀

Curious? 👉 Try out my example prompts and subscribe to my newsletter to stay up to date. Have fun saving time! 😊
